With the ChatGPT successor GPT-4, OpenAI is currently a hot topic again. Our colleague Jan Stahnke has already looked at how the model can be used. Here we take a closer look behind the scenes for you.

Below we explain which technical innovations are behind GPT-4. We took a very close look at the rather cryptic “Technical Report” and explain what OpenAI reveals about GPT-4 in its 98 pages.

How does GPT-4 work?

Basically, GPT-4 works just like ChatGPT. It learns to predict text, much like the suggestion feature on your phone’s keyboard.

It’s a lot smarter, of course, and uses a neural network – a jumble of numbers roughly modeled on synapses in the human brain that are “trained” for a task.

Using billions of text snippets, the network is taught to predict the next word in a sentence as precisely as possible. A small example: “Skyrim was released in the year” is most likely followed by “2011”.
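To make the idea of next-word prediction a little more tangible, here is a minimal sketch in Python. It is not how GPT-4 works internally: instead of a neural network it simply counts which word follows which in a tiny, made-up corpus. The corpus and the function names `train` and `predict_next` are assumptions chosen purely for illustration.

```python
from collections import Counter, defaultdict

# Tiny, made-up training corpus (an assumption for illustration only).
corpus = [
    "skyrim was released in the year 2011",
    "skyrim was released in the year 2011",
    "the game was released in the year 2011",
    "the sequel was released in the year 2015",
]


def train(sentences):
    """Count which word follows which word in the training sentences."""
    followers = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            followers[current][nxt] += 1
    return followers


def predict_next(followers, prompt):
    """Return the most frequent follower of the last word in the prompt."""
    last_word = prompt.lower().split()[-1]
    candidates = followers.get(last_word)
    if not candidates:
        return None  # the last word never appeared in the training data
    return candidates.most_common(1)[0][0]


model = train(corpus)
print(predict_next(model, "Skyrim was released in the year"))  # prints 2011
```

A real language model looks at the entire preceding text rather than just the last word, and it learns probabilities with a neural network instead of a lookup table, but the training goal is the same: guess what comes next.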

To turn it into a smart assistant and not just a better typing aid, the network was then fine-tuned on user questions and answers. In the process, it learns in a relatively short time to use its acquired knowledge of word sequences to answer questions as well as possible.

The future is more than just text

What is new in GPT-4 is so-called multimodality: in addition to text, GPT-4 can also process images!

What sounds unspectacular at first enables completely new applications. The official video from OpenAI shows a rough hand-drawn sketch of a website and the instruction to generate code from it, whereupon GPT-4 diligently produces executable code.

It can also be used to explain memes or solve guessing games.

GPT-4 can also recognize humor in images. For example, it describes this image as funny because it seems absurd to connect a bulky, old VGA connector to a modern smartphone. Image Source: https://www.reddit.com/r/hmmm/comments/ubab5v/hmmm/

OpenAI keeps the details under wraps. Presumably, however, there is a neural network behind it that has learned to combine images and text.

It would be conceivable, for example, that images with descriptive texts were used for training GPT-4. GPT-4 then learned to complete these descriptions using the image.

In practice it could have looked something like this: GPT-4 learns that the word “pyramid” follows in the description text of a picture if a pyramid is detected in the image.

Better Call GPT-4: AI as a lawyer, psychologist and wine connoisseur

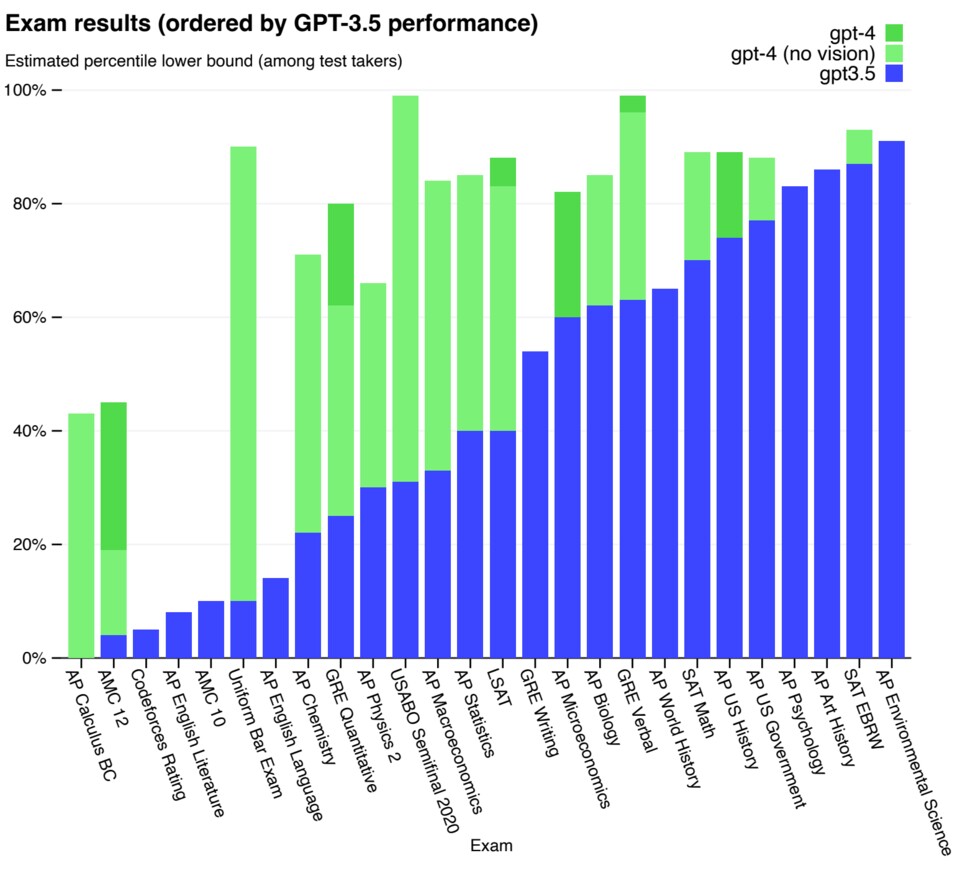

The result is quite impressive. OpenAI has paid special attention to various tests, from psychology to mathematics. In these, GPT-4 performs much better than the model underlying ChatGPT.

In the bar exam, a test for admission to the bar in the USA, GPT-4 no longer ranks among the bottom 10 percent of participants like its predecessor, but among the top 10 percent. The AI would be, at least according to this test, a good lawyer.

Results of GPT-4 in various tests, such as the bar exam or for college admissions. Source: OpenAI

Some of the better test results compared to its predecessor can be explained by the fact that GPT-4 now also understands images that belong to the tasks set. But even without images, GPT-4 is better than its predecessor.

This becomes apparent when the images are replaced with text descriptions. The descriptions do improve the results, but they still fall far short of a natural interplay of text and image.

Viewing images and text together, rather than laboriously translating the former into language, works best. This is a strong argument that artificial intelligence also learns better when it perceives the world itself, instead of being given pre-chewed bits of information.

Bigger, better, stronger?

In animals, a large brain relative to the rest of the body is a strong indicator of intelligence. The situation is apparently similar with GPT-4.

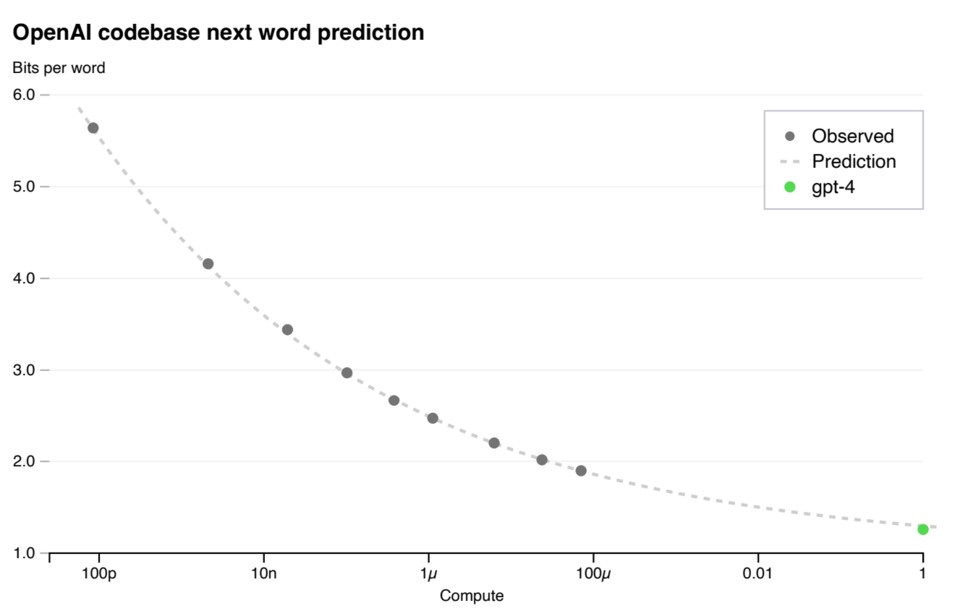

With more parameters, i.e. more artificial connections between the neurons of GPT-4, the performance of the neural network also increases. However, this applies only to the ability to correctly guess the next word fragment.

This is an important indication of the ability to understand a text. Of course, an error that is only half as big does not automatically mean that the model is twice as smart.
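A small calculation illustrates why the prediction error and “smartness” are not proportional. We assume here that the error is measured as cross-entropy loss in nats, the usual measure for language models; the article itself does not say which metric is meant. Under this assumption, a loss of L corresponds to the model assigning a probability of exp(-L) to the correct word fragment on average. The function name is hypothetical.

```python
import math


def probability_of_correct_token(loss_nats):
    """Convert a cross-entropy loss (in nats) into the geometric-mean
    probability that the model assigns to the correct next token."""
    return math.exp(-loss_nats)


# Halving the loss twice: the probability does not simply double each time.
for loss in (4.0, 2.0, 1.0):
    print(loss, round(probability_of_correct_token(loss), 3))
```

Going from a loss of 4.0 to 2.0 raises the probability of the correct fragment by a factor of about 7.4, while going from 2.0 to 1.0 raises it by a factor of only about 2.7. The same halving of the error therefore means very different things depending on where you start, and neither number says directly how well the model handles a real task.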

Still, experiments with GPT-4 variants of different sizes show that more is indeed better:

More neurons in the network pretty much provide the expected performance gains. Source: OpenAI

Nothing is known about the exact number of parameters. However, ChatGPT already uses about 175 billion parameters. With ordinary 16-bit floating-point numbers, a model of that size already occupies several hundred gigabytes of memory.
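The memory figure can be checked with a quick back-of-the-envelope calculation. The sketch below only counts the raw parameters at 16 bits (2 bytes) each and ignores everything else a running model needs, such as activations or caches. The function name `model_memory_gb` is our own.

```python
def model_memory_gb(num_parameters, bits_per_parameter=16):
    """Raw memory needed to store the parameters, in gigabytes (10^9 bytes)."""
    total_bytes = num_parameters * bits_per_parameter / 8
    return total_bytes / 1e9


chatgpt_parameters = 175_000_000_000  # about 175 billion, as stated above

print(model_memory_gb(chatgpt_parameters))      # prints 350.0
print(model_memory_gb(chatgpt_parameters, 32))  # prints 700.0
```

At 16 bits per parameter that comes to about 350 gigabytes, far more than a single consumer graphics card can hold.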

Predicting a single word fragment by sending a sentence through the neural network becomes incredibly time-consuming. And GPT-4 is unlikely to settle for fewer parameters than its predecessor.

With better and better hardware, more parameters could become possible. Even without further optimizations, the next versions of GPT can become stronger.

The dark side and the problematic handling of it

Although GPT-4 has made some progress over ChatGPT in terms of safety and factual accuracy, misinformation still occurs.

For example, in our tests, GPT-4 insisted that Reinhold Messner had lost his fingers while climbing a mountain in the Himalayas – and not, as everyone knows, some of his toes.

An effective “fact check” is missing, as is a way for GPT-4 to access up-to-date content, although this has already been successfully demonstrated in other publications. Likewise, GPT-4 can’t really learn anything outside of a conversation flow.

The problematic handling: OpenAI is becoming more and more secretive as its products mature.

Where previous publications detailed mathematical descriptions, “recipes” for training, and strategies, the 98 pages of GPT-4 read more like a mixture of advertising and self-adulation.

This is apparently due not least to the money OpenAI has scented since Microsoft’s $10 billion investment. And this is exactly where the lack of transparency hurts twice over: without knowing exactly how GPT-4 works, things like its fluctuating truthfulness are harder to assess.

In our opinion, OpenAI no longer does justice to the “Open” in the name.

Outlook

For all the criticism of the lack of scientific transparency, there are also some good things about the future of GPT-4.

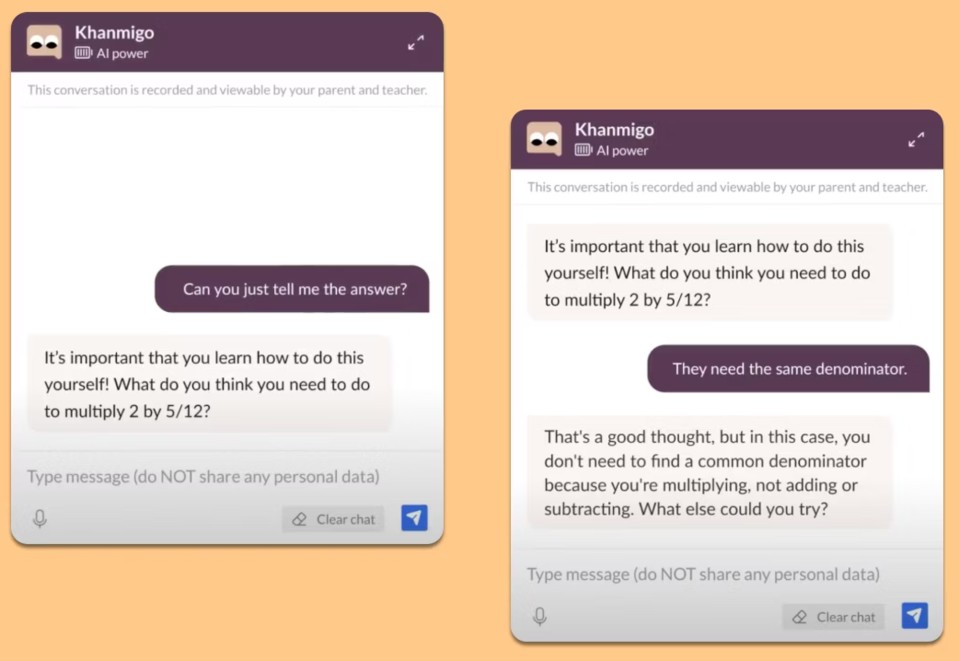

OpenAI is now working with the educational organization Khan Academy and developing an interactive teacher. Likewise, the enormous capacities of GPT-4 will soon be used to assist the blind.

GPT soon as a teacher at Khan Academy. Source: https://openai.com/customer-stories/khan-academy

Exactly these applications are what is actually exciting about GPT-4: Being able to understand a new type of user input creates completely new possibilities.

And even if GPT-4 can “only” work with images for the time being, it shows that steps in this direction are worthwhile so that artificial intelligence understands more of our world.


Now it’s your turn: What do you think of the progress OpenAI is making with GPT-4? Would you have wished for more transparency from the company? And in which areas can the new understanding of AI still be used? We look forward to your opinions, comments and ideas in the comments!
